Levels of autonomy for AI agents

User roles and agent independence

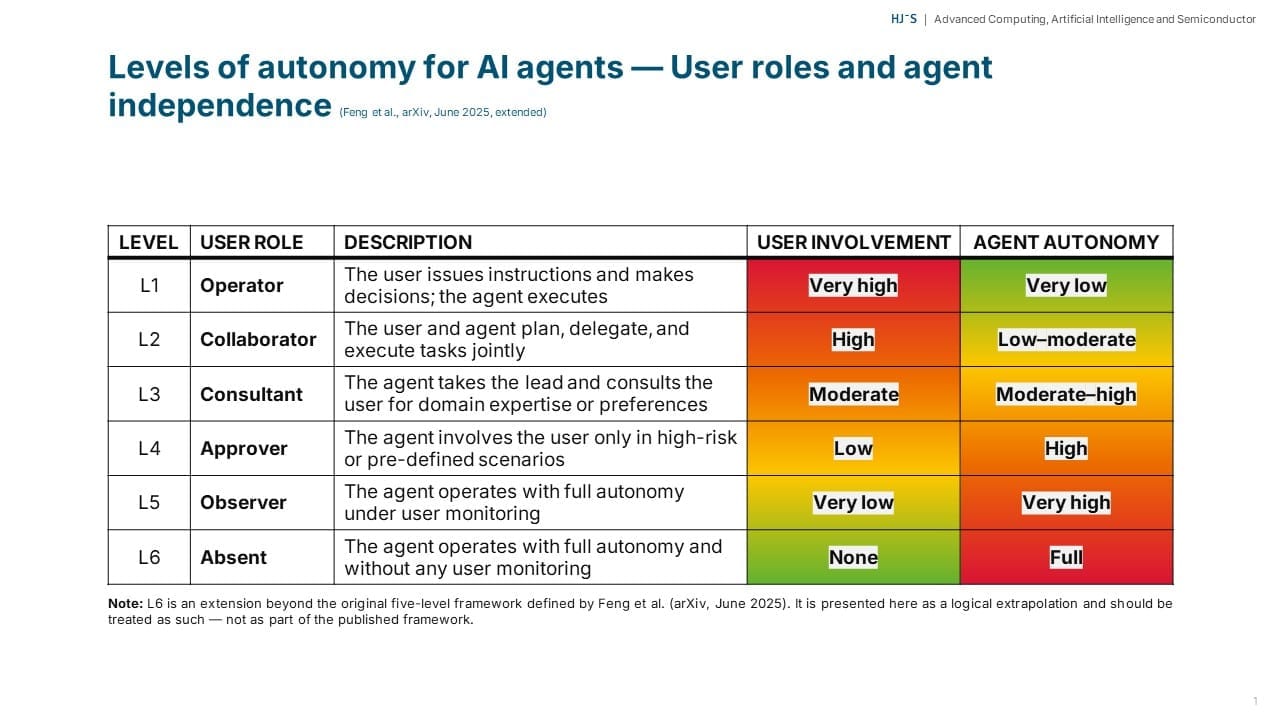

Based on my experience and discussions, agentic tools operate across autonomy levels from L1, where the user directs each step, to L5 or L6, where the agent functions almost independently. Most productive work currently occurs between L2 and L4, with users and agents collaborating on plans, the agent handling most tasks, and the user's role shifting toward decision approval.

At L4 (Approver), safety relies heavily on frequent permission prompts. Users consistently tell me that this creates approval fatigue: after dozens of requests, they stop reading carefully and approve automatically. In effect, the user's role silently shifts toward L5 (Observer), where the agent operates with high autonomy but without meaningful oversight. The safety mechanism still exists in the interface but no longer functions in practice.

Long-running sessions at L3-L5 autonomy often experience context degradation. As prompts, outputs, and partial plans accumulate, the agent's context window becomes overloaded. This leads to declining reasoning quality: the agent may lose track of objectives, repeat tasks, and make less coherent decisions. At higher autonomy levels, this issue is especially critical, as the agent relies on an increasingly outdated and noisy internal state.
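To make the accumulation problem concrete, here is a minimal sketch of a session context that resets itself once it grows past a budget. Everything in it is an assumption for illustration: `SessionContext`, `rough_token_count`, and the budget value are hypothetical names, the word count is a crude stand-in for a real tokenizer, and "keep the latest entry" stands in for a proper summarization step. It does not describe how any particular agentic tool manages its context.

```python
from dataclasses import dataclass, field


def rough_token_count(text: str) -> int:
    """Crude stand-in for a real tokenizer: counts whitespace-separated words."""
    return len(text.split())


@dataclass
class SessionContext:
    """Hypothetical context holder that resets when it exceeds a budget."""

    objective: str                 # the goal that must survive every reset
    budget: int = 2000             # assumed budget, in rough tokens
    entries: list[str] = field(default_factory=list)
    resets: int = 0

    def used(self) -> int:
        """Rough size of everything accumulated since the last reset."""
        return sum(rough_token_count(e) for e in self.entries)

    def add(self, entry: str) -> None:
        """Append a prompt, output, or partial plan; reset if over budget."""
        self.entries.append(entry)
        if self.used() > self.budget:
            self.reset()

    def reset(self) -> None:
        """Drop accumulated history, keeping only the most recent entry.

        A real system would more likely keep a summary; the latest entry
        is used here only as a placeholder for that summary.
        """
        self.entries = self.entries[-1:]
        self.resets += 1

    def prompt(self) -> str:
        """Build the text sent to the agent; the objective always comes first."""
        return "\n".join([f"Objective: {self.objective}", *self.entries])
```

The point of the sketch is the design choice, not the numbers: the objective is stored outside the resettable history, so a reset cannot make the agent lose track of what it was asked to do.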

In my observation, moving up the autonomy ladder without disciplined practices, such as clear boundaries for agent actions, regular context resets, and structured milestone reviews, risks creating a pseudo-L5 or L6 state. In this scenario, the agent operates with near-full autonomy; the user is nominally involved but largely disengaged; and both security and quality controls are significantly weaker than they appear.
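Two of these practices, clear boundaries and milestone reviews, can be sketched as a small policy object. This is an illustration under my own assumptions rather than a feature of any existing tool: `AutonomyPolicy`, `Decision`, the action names, and the review interval are all hypothetical.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"    # inside the boundary: run without prompting
    ASK = "ask"        # outside the boundary: prompt the user
    DENY = "deny"      # never permitted, regardless of approval
    REVIEW = "review"  # pause until the user completes a milestone review


@dataclass
class AutonomyPolicy:
    """Hypothetical boundary and milestone-review policy for an agent."""

    auto_allowed: frozenset[str]   # actions the agent may take unprompted
    forbidden: frozenset[str]      # actions the agent may never take
    review_every: int = 10         # assumed number of auto actions per milestone
    actions_since_review: int = 0

    def check(self, action: str) -> Decision:
        """Classify a proposed action before the agent executes it."""
        if action in self.forbidden:
            return Decision.DENY
        if self.actions_since_review >= self.review_every:
            return Decision.REVIEW
        if action in self.auto_allowed:
            self.actions_since_review += 1
            return Decision.ALLOW
        return Decision.ASK

    def complete_review(self) -> None:
        """Called once the user has actually reviewed the milestone."""
        self.actions_since_review = 0
```

A short usage example, with made-up action names:

```python
policy = AutonomyPolicy(
    auto_allowed=frozenset({"read_file", "run_tests"}),
    forbidden=frozenset({"push_to_main"}),
    review_every=2,
)
policy.check("read_file")      # Decision.ALLOW
policy.check("delete_branch")  # Decision.ASK
policy.check("push_to_main")   # Decision.DENY
```

The intent is that routine actions stop generating prompts, so the prompts that remain are rare enough to be read, and the periodic review gives the user a fixed point at which to re-engage.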

What has been your experience with agentic tools? Have you observed similar patterns? How do you manage autonomy and oversight in your workflows? I welcome your insights in the comments.